One of the main things I noticed is that it now provides two methods of uncompress. Most examples I found use snappy version 6, which seems to differ a bit from version 7 (the latest). The variants are based on the same underlying algorithm, but they aren't compatible: data compressed with one cannot be decompressed with the other. The most complete and useful example I found was here.

The snappy compression type is supported by AVRO. For example: `CREATE TABLE avroCompressTable (id INTEGER, compressibleAvroFile DATASET STORAGE FORMAT AVRO COMPRESS USING SNAPPYCOMPRESS DECOMPRESS USING SNAPPYDECOMPRESS);`. File formats vary by vendor as well; for example, Microsoft saves Excel data in the XLSX format and SQL Server Integration Packages in the DTSX format.

To prepare the Hadoop environment, create the HDFS directories (also avro, orc, json, ...).

To build this application: `sbt clean assembly`

Create the Kafka topic: `cd /usr/hdp/current/kafka-broker`, then `bin/kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic meetup`

The submit options are: `--master yarn --deploy-mode client --driver-memory 512m --executor-memory 512m --conf spark.ui.port=4244 kafkaconsumer.`

We set up the Spark Context and the Spark Streaming Context, then use KafkaUtils to prepare a stream of generic Avro data records from a byte array. From there we convert to a Scala case class that models the Twitter tweet from our source. We then convert to a DataFrame, register it as a temp table, and save to ORC, Avro, Parquet, and JSON files.
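The convert-and-save step described above might look roughly like the following. This is only a sketch against the Spark 1.6 DataFrame API, not the original program: the object name, the `saveAll` helper, the `basePath` argument, and the temp table name `meetup` are all hypothetical. It also assumes the DataFrame was created through a `HiveContext` (needed for ORC in Spark 1.x) and that the Databricks `spark-avro` package is on the classpath.

```scala
import org.apache.spark.sql.{DataFrame, SaveMode}

object SaveFormatsSketch {
  // df: the DataFrame built from the decoded stream records.
  // basePath: a hypothetical HDFS directory, e.g. one created during setup.
  def saveAll(df: DataFrame, basePath: String): Unit = {
    // Register the DataFrame so it can be queried with SQL (Spark 1.x API).
    df.registerTempTable("meetup") // table name is an assumption

    // Append each microbatch to one directory per format.
    df.write.mode(SaveMode.Append).format("orc").save(s"$basePath/orc")
    df.write.mode(SaveMode.Append).parquet(s"$basePath/parquet")
    df.write.mode(SaveMode.Append).json(s"$basePath/json")

    // Avro output relies on the external spark-avro data source (assumed present).
    df.write.mode(SaveMode.Append)
      .format("com.databricks.spark.avro")
      .save(s"$basePath/avro")
  }
}
```

In a streaming job, a helper like this would typically be called from inside `foreachRDD`, once per microbatch, after the RDD has been converted to a DataFrame.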

We set a number of parameters to improve the performance of the SparkSQL write to HDFS, including enabling Tungsten, Snappy compression, backpressure, and the KryoSerializer. We consume Kafka messages in microbatches of 2 seconds.

The issue here is that python-snappy is not compatible with Hadoop's snappy codec, which is what Spark uses to read the data when it sees a '.snappy' suffix.

Below is a basic comparison of ZLIB and SNAPPY and when to use each.

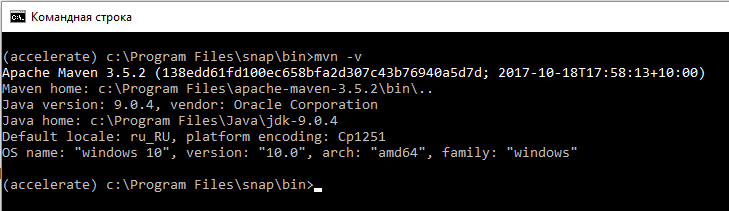

Writing ORC files without compression results in larger disk usage and slower performance. Hence, it is advisable to use compression. Below is a short Scala program and SBT build script to generate a Spark application to submit to YARN that will run on the HDP Sandbox with Spark 1.6.
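As a rough illustration of the configuration part of such a program, the performance settings mentioned earlier (Tungsten, Snappy compression, backpressure, the KryoSerializer, and 2-second microbatches) could be set as in the sketch below. It assumes Spark 1.6 property names; the object name and the application name are hypothetical, and reading "Snappy compression" as `spark.io.compression.codec` is a guess, since the post may equally have meant a file-format codec.

```scala
import org.apache.spark.{SparkConf, SparkContext}
import org.apache.spark.streaming.{Seconds, StreamingContext}

object StreamingConfigSketch {
  // Builds a StreamingContext with the tuning options described in the text.
  // The master URL is expected to come from spark-submit (e.g. --master yarn).
  def buildStreamingContext(): StreamingContext = {
    val conf = new SparkConf()
      .setAppName("kafka-consumer-sketch") // hypothetical name
      // Kryo is usually faster and more compact than Java serialization.
      .set("spark.serializer", "org.apache.spark.serializer.KryoSerializer")
      // Tungsten flag as it existed in Spark 1.5/1.6 (removed in 2.0).
      .set("spark.sql.tungsten.enabled", "true")
      // Assumption: Snappy for Spark's internal block compression.
      .set("spark.io.compression.codec", "snappy")
      // Let Spark Streaming throttle the ingest rate under load.
      .set("spark.streaming.backpressure.enabled", "true")

    val sc = new SparkContext(conf)
    // Microbatches of 2 seconds, as stated in the text.
    new StreamingContext(sc, Seconds(2))
  }
}
```

The returned context still needs an input stream and at least one output operation before `start()` is called.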
